@openprivacy, glad to help! To try and answer your questions:

Regarding Still Images

We always render to still image sequences, then composite them together in Adobe After Effects. This has a couple of benefits:

- Multiple processes can render different parts of the animation simultaneously, without having to merge any frames back into a single movie file.

- It lets you render to much higher-quality image formats, like 32-bit floating point .exr (for when we do work for film) or 16-bit PNG (if we’re doing standard HD work). You can do some googling on “Linear Color Workflow”: this is the process of using 32-bit floating point imagery throughout the film creation process, so that color correction and other post-operations give you as much color data to work with as possible. If you are familiar at all with the RAW image formats that a lot of DSLR cameras save to, this is pretty much the same thing. Rendering to a 32-bit .exr is like creating a “RAW” image that you can manipulate in post in ways that would be impossible with standard formats.
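To put rough numbers on the storage side of that trade-off, here's a small Python sketch that computes the uncompressed size of one frame at different bit depths. It assumes a 1920x1080 RGBA frame and ignores compression (PNG and most .exr codecs compress), so real files on disk will be smaller; `frame_bytes` is just an illustrative helper.

```python
def frame_bytes(width, height, channels=4, bits_per_channel=8):
    """Uncompressed size of one frame in bytes."""
    return width * height * channels * bits_per_channel // 8


if __name__ == "__main__":
    # 8-bit, 16-bit (PNG-style) and 32-bit float (.exr-style), all uncompressed RGBA.
    for bits in (8, 16, 32):
        size = frame_bytes(1920, 1080, channels=4, bits_per_channel=bits)
        print(f"{bits:>2}-bit RGBA 1920x1080: {size / 2**20:.1f} MiB per frame")
```

That works out to about 7.9, 15.8 and 31.6 MiB per frame before compression, so a 32-bit sequence needs roughly four times the disk space of an 8-bit one.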

But if compositing software like After Effects or Nuke isn’t available to you, there are a number of ways to convert image sequences into movie files. You could even fire up Blender, use its compositing features as a post tool to combine the frames, and export the result to a movie file.

If you just have one GPU for now, there’s no reason you can’t render directly to QuickTime or something similar. It all depends on what you plan to do with the rendered frames. If you plan to do compositing or other post work, still sequences are the way to go. If you just want to spit out your renders and use them as-is from Blender, with only one render process running at a time, then render directly to QuickTime.

Regarding the Python Script

As you caught above, I’ve got the Python script up in my first post, along with an example of how to fire up Blender with it. For redundancy, to start a render job from the command line using the Python script, you would type this:

$ blender -b file.blend -P the_script.py -s 1 -e 10 -a &

This launches Blender in background mode (no UI; the trailing & also backgrounds the process in your shell), opens file.blend, runs the Python script as “startup” instructions, and then renders frames 1 through 10. The Python startup instructions are what tell Blender to use the GPU instead of the CPU for rendering. When you have multiple cards, you also have some options in how you use them. If you look at the last line of the Python example, you’ll see:

bpy.context.user_preferences.system.compute_device = 'CUDA_0'

Since I have four GPUs, this tells Blender to use just the first card in the CUDA array for the job and ignore the other three. CUDA_1 would be the second card in the array, CUDA_2 the third, and so on. CUDA_MULTI_0 tells Blender to use all four cards together on the same job, as if they were one massive card (each card still needs enough memory to hold the whole scene, though).
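Since each background process can be pinned to one card this way, another option is to run one job per card, each on its own frame range (which is where rendering to still sequences pays off). Here's a minimal Python sketch that splits a frame range into one contiguous chunk per card and pairs each chunk with a `CUDA_n` device name. The helper `split_frames` and the idea of one startup script per card are illustrative assumptions, not part of the setup described in this thread.

```python
def split_frames(start, end, num_gpus):
    """Split the inclusive frame range [start, end] into contiguous chunks,
    one per GPU, returned as (device_name, first_frame, last_frame) tuples."""
    if num_gpus < 1:
        raise ValueError("need at least one GPU")
    total = end - start + 1
    base, extra = divmod(total, num_gpus)
    chunks = []
    frame = start
    for gpu in range(num_gpus):
        # The first `extra` cards take one additional frame so every frame is covered.
        count = base + (1 if gpu < extra else 0)
        if count == 0:
            continue  # more cards than frames: leave this card idle
        chunks.append((f"CUDA_{gpu}", frame, frame + count - 1))
        frame += count
    return chunks


if __name__ == "__main__":
    for device, first, last in split_frames(1, 250, 4):
        print(device, first, last)
```

Each tuple would then become its own `blender -b file.blend -P <script> -s <first> -e <last> -a &` job, with a (hypothetical) per-card startup script setting `compute_device` to the matching name.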

Regarding -a VS -P

You need both on the command line, since they do different jobs. -P loads the Python startup script, while -a tells Blender to render an animation, using the frame range set by -s (start frame) and -e (end frame), or the range saved in the .blend if you leave those out. -f can be used instead of -a to render a single frame. So, say you wanted a single-frame render using the GPU:

$ blender -b file.blend -P the_script.py -f 5 &

This will render just frame 5 of file.blend, using the python startup instructions.
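If you'd rather launch these jobs from another Python script than type them out, the command lines can be built as argument lists. This is a sketch that assumes `blender` is on your PATH and reuses the `file.blend` / `the_script.py` placeholders from above; `blender_args` is a hypothetical helper. Blender processes its arguments in the order given, which is why the file comes first, then the script, and `-s`/`-e` are placed before `-a`.

```python
def blender_args(blend_file, script, frame=None, start=None, end=None):
    """Build a background-render command line for Blender.

    Pass `frame` for a single frame (-f), or `start`/`end` for an animation (-a).
    """
    args = ["blender", "-b", blend_file, "-P", script]
    if frame is not None:
        args += ["-f", str(frame)]
    else:
        # -s/-e go before -a, because Blender handles arguments in order.
        if start is not None:
            args += ["-s", str(start)]
        if end is not None:
            args += ["-e", str(end)]
        args.append("-a")
    return args


if __name__ == "__main__":
    print(" ".join(blender_args("file.blend", "the_script.py", start=1, end=10)))
    print(" ".join(blender_args("file.blend", "the_script.py", frame=5)))
```

Passing one of these lists to `subprocess.Popen` starts the render and returns immediately, which is roughly what the trailing `&` does in the shell.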

Regarding CPU

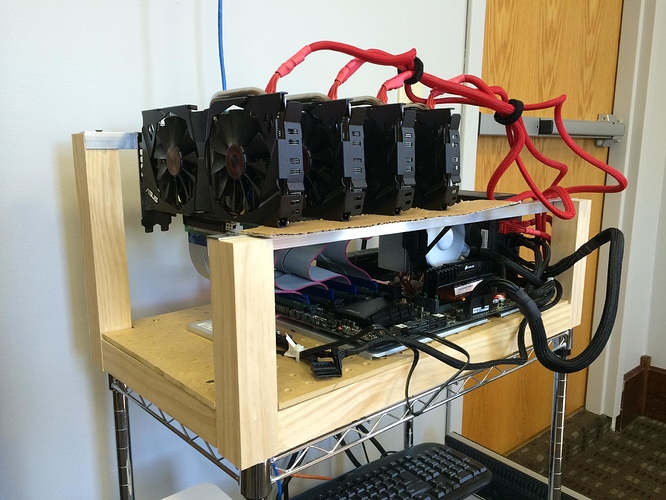

Actually, what I’ve noticed on my machine is that each GPU uses up exactly one entire CPU thread, pegged at 100% while rendering. Using htop on Linux, I can see that 4 of the 8 threads on my i7 are pegged at 100%, while the other 4 sit completely idle at 0% utilization. I’ve also tested this with just two cards rendering, and only two corresponding threads were pegged at 100%. So I think an i7 might even be overkill for a GPU machine. You just want to make sure you have enough CPU threads for the number of GPUs you plan to run. The chip itself doesn’t need to be that beefy, since the computation happens on the GPU; the CPU is just there to catch the results from the GPU, convert them into image data, save them to disk, and so on.
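Going by that rule of thumb (one CPU thread per GPU, as observed on this particular machine), a quick sanity check is to compare the number of logical CPUs the OS reports against the number of cards. `os.cpu_count()` is standard Python; `enough_threads` is a hypothetical helper, and the one-thread-per-GPU ratio is an observation from this setup, not a guarantee.

```python
import os


def enough_threads(num_gpus, threads_per_gpu=1, cpu_threads=None):
    """Return True if there are at least threads_per_gpu logical CPU threads per GPU.

    cpu_threads defaults to os.cpu_count(), which counts logical CPUs
    (hyper-threads included) and can return None if it can't be determined.
    """
    if cpu_threads is None:
        cpu_threads = os.cpu_count() or 1
    return cpu_threads >= num_gpus * threads_per_gpu


if __name__ == "__main__":
    # The i7 described above: 8 threads driving 4 GPUs.
    print(enough_threads(4, cpu_threads=8))
```

Raising `threads_per_gpu` is one way to leave some spare threads for the OS and disk I/O.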

970 Being “Old”

It's certainly not “old”, since these are still being sold quite a bit, but when it comes to Linux drivers, NVIDIA seems to only support the absolute latest and greatest cards (because they want you to keep buying the latest and greatest). To get CUDA working properly with my 970s in Ubuntu Server 14.04 LTS, it took some trial and error to find specific driver versions and download archived .run packages from NVIDIA. I think v346.72 is what I landed on for this system.

Now, I’ve found that card choice boils down to three things for me: video memory, CUDA core count, and Linux driver support. Get the card with the most CUDA cores and memory you can afford and can efficiently power. Most high-end GTX cards draw somewhere around 200-240 watts each. The 970 draws in the 140s (last I checked, but I'm typing from memory), which allowed me to put 4 cards on the same PSU as the rest of the system.
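The power side is simple arithmetic. Here's a minimal sketch where the PSU rating, the roughly 150 W base-system draw, and the 80% headroom factor are all assumptions to replace with your own numbers; 145 W is the GTX 970's rated TDP, in line with the figure above, and `max_gpus` is a hypothetical helper.

```python
def max_gpus(psu_watts, system_watts, gpu_watts, headroom=0.8):
    """How many GPUs fit on one PSU while keeping total draw under
    headroom * psu_watts (0.8 means stay below 80% of the rating)."""
    budget = psu_watts * headroom - system_watts
    if budget <= 0:
        return 0
    return int(budget // gpu_watts)


if __name__ == "__main__":
    # Hypothetical: 1000 W PSU, ~150 W for CPU, motherboard and drives.
    for watts in (145, 240):
        print(f"{watts} W cards: {max_gpus(1000, 150, watts)}")
```

With those assumed numbers, the budget is 650 W: enough for four 145 W cards but only two 240 W cards.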

Regarding RAM

Memory is important for large blend scenes, but when rendering on the GPU, it's actually the video memory on your card that gets used. The video memory on the card is the “RAM” that buffers texture and polygon data during rendering. My 970s each have 3GB of video memory, which means each card can hold up to 3GB of texture and mesh data during rendering. If you’ve got billions of polygons and gigs of texture images in a single render, you’ve got to take this into consideration. Low-poly scenes with only a few megs of textures aren’t really a threat to most cards' memory sizes. Traditional RAM is what gets used when rendering on the CPU; for GPU rendering, it matters much less. RAM, whether it's on the GPU or on the motherboard, is mostly there to stash and cache texture data, so you just need enough memory to hold whatever your scene uses.
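A rough way to check whether a scene's textures will fit on a card is to add up their uncompressed sizes. This sketch assumes 8-bit RGBA textures (4 bytes per pixel; use `bytes_per_channel=4` for float images) and a hypothetical texture list. It ignores geometry, the BVH and render buffers, which also live in video memory, so treat the total as a lower bound. `texture_bytes` and `fits_on_card` are illustrative helpers.

```python
def texture_bytes(width, height, channels=4, bytes_per_channel=1):
    """Uncompressed size of one texture in bytes (8-bit RGBA by default)."""
    return width * height * channels * bytes_per_channel


def fits_on_card(textures, card_gib):
    """Return (total_bytes, fits) for a list of (width, height) textures,
    compared against a card with card_gib GiB of video memory."""
    total = sum(texture_bytes(w, h) for w, h in textures)
    return total, total <= card_gib * 2**30


if __name__ == "__main__":
    # Hypothetical scene: forty 4K textures and a hundred 1K textures.
    scene = [(4096, 4096)] * 40 + [(1024, 1024)] * 100
    total, fits = fits_on_card(scene, card_gib=3)
    print(f"{total / 2**30:.2f} GiB of textures, fits: {fits}")
```

Each 4096x4096 RGBA texture is 64 MiB on its own, so a few dozen of them already take up most of a 3GB card.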

Regarding _cycles

When you start up Blender on the command line with a startup script (-P), _cycles is a Python module that is made available to your script, since it lives with the Blender runtime. No extra steps or installations are required to make that work. It is specific to Blender, though: _cycles is only available to Python code running inside Blender, such as startup scripts. There may be some way to get at the _cycles module from an external Python, but it lives with the Python version bundled with Blender.
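If you want a script to fail gracefully when it's run outside Blender, you can check whether `_cycles` is importable before relying on it. Here's a minimal sketch using the standard library's `importlib.util.find_spec`. Under a normal system Python it should report False. Inside Blender's bundled Python (for example via `-P`), it should report True when Cycles is built in. The helper name `cycles_available` is hypothetical.

```python
import importlib.util


def cycles_available():
    """True if the _cycles module can be found by the running interpreter."""
    return importlib.util.find_spec("_cycles") is not None


if __name__ == "__main__":
    if cycles_available():
        print("Running inside Blender: _cycles is available")
    else:
        print("_cycles not found: run this via blender -b file.blend -P script.py")
```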

Regarding your Son

FRIGGIN AWESOME!!!